Ethernet flow control is a mechanism for temporarily stopping the transmission of data on Ethernet family computer networks. The goal of this mechanism is to avoid packet loss in the presence of network congestion.

The first flow control mechanism, the pause frame, was defined by the IEEE 802.3x standard. The follow-on priority-based flow control, defined in the IEEE 802.1Qbb standard, provides a link-level flow control mechanism that can be controlled independently for each class of service (CoS), as defined by IEEE P802.1p. It is applicable to data center bridging (DCB) networks and allows voice over IP (VoIP), video over IP, and database synchronization traffic to be prioritized over default data traffic and bulk file transfers.

Description

A sending station (computer or network switch) may be transmitting data faster than the other end of the link can accept it. Using flow control, the receiving station can signal the sender requesting suspension of transmissions until the receiver catches up. Flow control on Ethernet can be implemented at the data link layer.

The first flow control mechanism, the pause frame, was defined by the Institute of Electrical and Electronics Engineers (IEEE) task force that defined full duplex Ethernet link segments. The IEEE standard 802.3x was issued in 1997.[1]

Pause frame

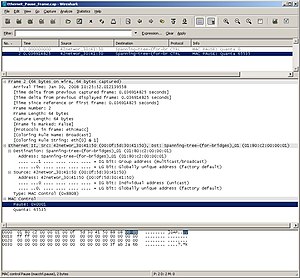

An overwhelmed network node can send a pause frame, which halts the transmission of the sender for a specified period of time. A media access control (MAC) frame (EtherType 0x8808) is used to carry the pause command, with the Control opcode set to 0x0001 (hexadecimal).[1] Only stations configured for full-duplex operation may send pause frames. When a station wishes to pause the other end of a link, it sends a pause frame to either the unique 48-bit destination address of this link or to the 48-bit reserved multicast address of 01-80-C2-00-00-01.[2]: Annex 31B.3.3 The use of a well-known address makes it unnecessary for a station to discover and store the address of the station at the other end of the link.
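The frame described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `build_pause_frame` and the example source address are hypothetical, and the sketch assumes the 16-bit pause time directly follows the opcode and that the frame is zero-padded to the 60-byte Ethernet minimum (the 4-byte frame check sequence is left to the hardware).

```python
import struct

# Values taken from the text above.
PAUSE_DST = bytes.fromhex("0180C2000001")   # reserved multicast address
MAC_CONTROL_ETHERTYPE = 0x8808
PAUSE_OPCODE = 0x0001

# Assumption: minimum Ethernet frame size without the 4-byte FCS.
MIN_FRAME_NO_FCS = 60


def build_pause_frame(src_mac: bytes, pause_quanta: int) -> bytes:
    """Return the bytes of a pause frame (without FCS)."""
    if len(src_mac) != 6:
        raise ValueError("source MAC must be exactly 6 bytes")
    if not 0 <= pause_quanta <= 0xFFFF:
        raise ValueError("pause time must fit in 16 bits")
    # EtherType, opcode and pause time are big-endian 16-bit fields.
    fields = struct.pack("!HHH", MAC_CONTROL_ETHERTYPE, PAUSE_OPCODE, pause_quanta)
    frame = PAUSE_DST + src_mac + fields
    # Zero padding fills the rest of the minimum-size frame.
    return frame.ljust(MIN_FRAME_NO_FCS, b"\x00")


frame = build_pause_frame(bytes.fromhex("020000000001"), 0xFFFF)
```

With these assumptions the header and fields occupy 18 bytes, leaving 42 bytes of padding.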

Another advantage of using this multicast address arises from the use of flow control between network switches. The particular multicast address used is selected from a range of addresses that have been reserved by the IEEE 802.1D standard, which specifies the operation of switches used for bridging. Normally, a frame with a multicast destination sent to a switch will be forwarded out to all other ports of the switch. However, this range of multicast addresses is special and will not be forwarded by an 802.1D-compliant switch. Instead, frames sent to this range are understood to be frames meant to be acted upon only within the switch.
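The forwarding decision can be illustrated with a small check. The text above does not give the bounds of the reserved range; the sketch assumes the commonly documented block 01-80-C2-00-00-00 through 01-80-C2-00-00-0F, and the function name `is_bridge_reserved` is hypothetical.

```python
# Assumption: the reserved block is 01-80-C2-00-00-00 .. 01-80-C2-00-00-0F.
RESERVED_PREFIX = bytes.fromhex("0180C20000")
RESERVED_LAST_OCTET_MAX = 0x0F


def is_bridge_reserved(mac: str) -> bool:
    """Return True if a compliant bridge should not forward this destination."""
    raw = bytes.fromhex(mac.replace("-", "").replace(":", ""))
    if len(raw) != 6:
        raise ValueError("MAC address must be 6 bytes")
    return raw[:5] == RESERVED_PREFIX and raw[5] <= RESERVED_LAST_OCTET_MAX


# The pause frame destination falls inside the reserved block.
pause_is_local = is_bridge_reserved("01-80-C2-00-00-01")
```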

A pause frame carries the requested pause time as a two-byte (16-bit) unsigned integer (0 through 65535). The pause time is measured in units of pause quanta, where each quantum is equal to 512 bit times, so the absolute duration of a given value depends on the link speed.
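As a worked example of the quanta arithmetic, the following sketch converts a pause time into seconds for a given link speed. The function name `pause_duration_seconds` and the example link speeds are illustrative choices, not part of the standard.

```python
BITS_PER_QUANTUM = 512  # one pause quantum is 512 bit times


def pause_duration_seconds(pause_quanta: int, link_bps: float) -> float:
    """Convert a 16-bit pause time into seconds on a link of link_bps bit/s."""
    if not 0 <= pause_quanta <= 0xFFFF:
        raise ValueError("pause time must fit in 16 bits")
    if link_bps <= 0:
        raise ValueError("link speed must be positive")
    return pause_quanta * BITS_PER_QUANTUM / link_bps


# Maximum pause on a 1 Gbit/s link: 65535 * 512 bits / 1e9 bit/s, about 33.6 ms.
max_pause_1g = pause_duration_seconds(0xFFFF, 1e9)
# The same value on a 10 Gbit/s link lasts a tenth as long, about 3.36 ms.
max_pause_10g = pause_duration_seconds(0xFFFF, 10e9)
```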

By 1999, several vendors supported receiving pause frames, but fewer implemented sending them.[3][4]

Issues

One original motivation for the pause frame was to handle network interface controllers (NICs) that did not have enough buffering to handle full-speed reception. This problem has become less common with advances in bus speeds and memory sizes. A more likely scenario is network congestion within a switch. For example, a flow can come into a switch on a higher-speed link than the one it goes out on, or several flows can come in over two or more links whose combined rate exceeds an output link's bandwidth. Either case will eventually exhaust any amount of buffering in the switch. However, blocking the sending link will cause all flows over that link to be delayed, even those that are not causing any congestion. This situation is a case of head-of-line (HOL) blocking, and can happen more often in core network switches due to the large numbers of flows generally being aggregated. Many switches use a technique called virtual output queues to eliminate the HOL blocking internally, and so never send pause frames.[4]
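The claim that sustained oversubscription exhausts any finite buffer can be made concrete with a short calculation. This is an idealized sketch that assumes constant ingress and egress rates and an initially empty buffer; the function name and the example figures are hypothetical.

```python
from typing import Optional


def seconds_until_buffer_full(buffer_bytes: int,
                              ingress_bps: float,
                              egress_bps: float) -> Optional[float]:
    """Time until a buffer overflows, or None if it never fills."""
    excess_bps = ingress_bps - egress_bps
    if excess_bps <= 0:
        # The output link keeps up, so the buffer does not grow.
        return None
    return buffer_bytes * 8 / excess_bps


# Hypothetical case: 10 Gbit/s in, 1 Gbit/s out, 1 MB of buffering.
# 8,000,000 bits / 9e9 bit/s is a little under one millisecond.
fill_time = seconds_until_buffer_full(1_000_000, 10e9, 1e9)
```

Doubling the buffer only doubles the time to overflow, which is why buffering alone cannot absorb a persistent rate mismatch.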

Subsequent efforts

Congestion management

Another effort began in March 2004, and in May 2004 it became the IEEE P802.3ar Congestion Management Task Force. In May 2006, the objectives of the task force were revised to specify a mechanism to limit the transmitted data rate at about 1% granularity. The request was withdrawn and the task force was disbanded in 2008.[5]

Priority flow control

Ethernet flow control disturbs the Ethernet class of service (defined in IEEE 802.1p), because traffic of all priorities is stopped in order to clear existing buffers, which may themselves hold low-priority data. As a remedy to this problem, Cisco Systems defined its own priority flow control extension to the standard protocol. This mechanism uses 14 bytes of the 42-byte padding in a regular pause frame. The MAC control opcode for a Priority pause frame is 0x0101. Unlike the original pause, Priority pause indicates the pause time in quanta for each of eight priority classes separately.[6] The extension was subsequently standardized by the Priority-based Flow Control (PFC) project authorized on March 27, 2008, as IEEE 802.1Qbb.[7] Draft 2.3 was proposed on June 7, 2010. Claudio DeSanti of Cisco was editor.[8] The effort was part of the data center bridging task group, which developed Fibre Channel over Ethernet.[9]
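A Priority pause frame can be sketched in the same way as a regular pause frame. The field layout used here is an assumption, not taken from the text above: the opcode is followed by a 16-bit priority-enable vector (one bit per class) and then eight 16-bit pause times, with the frame sent to the same reserved multicast address and zero-padded to 60 bytes. The function name `build_pfc_frame` is hypothetical.

```python
import struct
from typing import Mapping

PAUSE_DST = bytes.fromhex("0180C2000001")  # assumed: same address as regular pause
MAC_CONTROL_ETHERTYPE = 0x8808
PFC_OPCODE = 0x0101
MIN_FRAME_NO_FCS = 60  # assumed minimum frame size without FCS


def build_pfc_frame(src_mac: bytes, quanta_by_priority: Mapping[int, int]) -> bytes:
    """Build a Priority pause frame from a {priority: pause quanta} mapping."""
    if len(src_mac) != 6:
        raise ValueError("source MAC must be exactly 6 bytes")
    enable_vector = 0
    times = [0] * 8
    for priority, quanta in quanta_by_priority.items():
        if not 0 <= priority <= 7:
            raise ValueError("priority must be in 0..7")
        if not 0 <= quanta <= 0xFFFF:
            raise ValueError("pause time must fit in 16 bits")
        enable_vector |= 1 << priority   # mark this class as addressed
        times[priority] = quanta         # per-class pause time in quanta
    fields = struct.pack("!HHH8H", MAC_CONTROL_ETHERTYPE, PFC_OPCODE,
                         enable_vector, *times)
    return (PAUSE_DST + src_mac + fields).ljust(MIN_FRAME_NO_FCS, b"\x00")


# Pause only priority 3 for 100 quanta; the other classes keep flowing.
pfc = build_pfc_frame(bytes.fromhex("020000000001"), {3: 100})
```

Classes whose bit is not set in the enable vector are unaffected, which is what lets higher-priority traffic continue while a congested class is paused.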

References

- IEEE Standards for Local and Metropolitan Area Networks: Supplements to Carrier Sense Multiple Access with Collision Detection (CSMA/CD) Access Method and Physical Layer Specifications - Specification for 802.3 Full Duplex Operation and Physical Layer Specification for 100 Mb/S Operation on Two Pairs of Category 3 or Better Balanced Twisted Pair Cable (100BASE-T2). Institute of Electrical and Electronics Engineers. 1997. doi:10.1109/IEEESTD.1997.95611. ISBN 978-1-55937-905-2. Archived from the original on July 13, 2012.

- IEEE Standard for Ethernet (PDF). IEEE Standards Association. 2018-08-31. doi:10.1109/IEEESTD.2018.8457469. ISBN 978-1-5044-5090-4. Retrieved 2022-11-29.

- Ann Sullivan; Greg Kilmartin; Scott Hamilton (September 13, 1999). "Switch Vendors pass interoperability tests". Network World. pp. 81–82. Retrieved May 10, 2011.

- "Vendors on flow control". Network World Fusion. September 13, 1999. Archived from the original on 2012-02-07. Vendor comments on flow control in the 1999 test.

- "IEEE P802.3ar Congestion Management Task Force". December 18, 2008. Retrieved May 10, 2011.

- "Priority Flow Control: Build Reliable Layer 2 Infrastructure" (PDF). White Paper. Cisco Systems. June 2009. Retrieved May 10, 2011.

- IEEE 802.1Qbb

- "IEEE 802.1Q Priority-based Flow Control". Institute of Electrical and Electronics Engineers. June 7, 2010. Retrieved May 10, 2011.

- "Data Center Bridging Task Group". Institute of Electrical and Electronics Engineers. June 7, 2010. Retrieved May 10, 2011.

External links

- "Ethernet Media Access Control - PAUSE Frames". TechFest Ethernet Technical Summary. 1999. Archived from the original on 2012-02-04. Retrieved May 10, 2011.

- Tim Higgins (November 7, 2007). "When Flow Control is not a Good Thing". Small Net Builder. Retrieved January 6, 2020.

- Linux Tool for generating flow control PAUSE frames. Archived 2012-05-24 at the Wayback Machine.

- Python Tool to Generate PFC Frames

- "Ethernet Flow Control". Topics in High-Performance Messaging. Archived from the original on 2007-12-08.